Copilot for Power BI: what it actually does for a finance team in 2026 (tested on a realistic SaaS P&L)

Every Microsoft keynote in the last eighteen months has had a Copilot-for-Power-BI moment. Ask your data a question in plain English. Get an executive summary in a click. Build a report without touching a pane.

Finance leaders hear the pitch, nod politely, and then ask a much harder question: will it get the numbers right, and will I know when it doesn't?

To answer that honestly, we built a realistic SaaS P&L — a 200-person, ~$40M ARR company with the usual shape: subscription revenue, services revenue, sales and marketing spend weighted to the top of the funnel, R&D and G&A tracking to plan, deferred revenue, ARR bridge, and the kind of slightly-messy actuals every finance team recognizes. Then we put Copilot for Power BI through five workflows an FP&A analyst actually runs.

This is what we found.

The short version

Copilot for Power BI in 2026 is a competent assistant for narration and exploration, an unreliable partner for quantitative analysis, and a genuine risk for explanation of anomalies unless your semantic model and governance are in far better shape than most finance teams' are today.

It is not a replacement for an FP&A analyst. It is a speed multiplier for the 60% of their week that is answering predictable questions from people who won't read a dashboard.

That alone can be worth it — but only if you know what you are turning on.

What we tested

Five workflows, each with the same inputs and the same evaluation criteria: did the answer match the ground truth, and would a finance leader catch the error if it didn't?

- Natural-language Q&A against a finance semantic model

- Auto-generated executive summary on a monthly management pack

- Variance explanation for an unexpected movement in Gross Margin

- Ad-hoc analysis: "why did net new ARR slow in March?"

- DAX authoring for a new "revenue net of churn" measure

All five ran on a Premium capacity with Copilot enabled and the semantic model prepared to Microsoft's published guidance — descriptions on tables and measures, synonyms set, row-level security respected. In other words, the setup Copilot expects, not the setup most estates actually have.

Workflow 1 — Natural-language Q&A

What it did well. Simple, single-dimension questions worked reliably. "What was ARR at the end of Q1?" "How much did we spend on marketing in March?" "Top ten customers by MRR." These returned correct numbers consistently.

Where it slipped. As soon as the question crossed two dimensions with any ambiguity, results became uneven. "How did gross retention trend for enterprise customers last quarter?" returned a correct trend line once, a wrong segment filter the second time, and silently dropped the segmentation entirely on the third run. The answer looked correct every time.

The takeaway for finance. For questions you could have answered with a basic filter, Copilot is faster than clicking through the report. For questions that require judgment about what this company means by "enterprise" or "gross retention," it will be confidently wrong some percentage of the time, and your reviewer will have no cue that something is off.

Workflow 2 — Auto-generated executive summary

What it did well. Given a monthly management pack with six visuals, Copilot produced a readable, structured four-paragraph narrative. It correctly identified the three largest movements, used the same vocabulary as the report titles, and did not fabricate numbers.

Where it slipped. It consistently misattributed cause. A dip in net new ARR caused by a single enterprise churn was described as "continued softening in the SMB segment." A marketing overspend driven by a campaign pull-forward was described as "ramping demand generation in anticipation of Q2." Both sounded plausible. Both were wrong.

The takeaway for finance. The narrative feature is an excellent first draft of a board commentary — but it should never ship without an analyst owning the causal claims. If your process is "Copilot writes it, we send it," you will eventually mislead a board. If your process is "Copilot writes it, an analyst rewrites the why, we send it," you will save ninety minutes every month pack.

Workflow 3 — Variance explanation

This is the one finance teams are most excited about, and the one Copilot handled worst.

We asked: "Why did gross margin drop 180 basis points in March?"

Copilot returned a plausible-sounding explanation citing hosting costs and support headcount. In our data, the real driver was a one-time revenue clawback that moved ~$220k of already-recognized revenue into deferred. Copilot never surfaced it, because the clawback lived in a sub-measure that wasn't described well enough for the model to reason about.

This is the single most important finding in our test. Copilot does not know what it doesn't know. It will answer causal questions using whatever pattern it can find in the data shape, and it will sound confident doing it.

The takeaway for finance. Variance explanation is the workflow where AI is most attractive and most dangerous. Until your semantic model has every material movement described at the measure level — with clear synonyms and documented intent — this feature should be treated as a starting point for human analysis, never a conclusion.

Workflow 4 — Ad-hoc "why" analysis

"Why did net new ARR slow in March?" returned a three-paragraph response that correctly identified the slowdown, correctly listed the top three segments by movement, and then invented a plausible narrative about sales-cycle elongation that was not supported anywhere in the underlying data.

This is a generalizable pattern we saw across every test run: Copilot is excellent at describing the shape of a number, and poor at defending a claim about why the number is shaped that way.

Workflow 5 — DAX authoring

We asked Copilot to write a "revenue net of churn" measure against the semantic model.

The first attempt compiled, returned a number, and was wrong — it double-counted reactivated customers. The second attempt, after we explained the double-count, compiled and returned a different wrong number. The third attempt, with a specific definition pasted in, compiled and returned the correct number.

This is the least surprising result in the test. AI coding assistants in 2026 handle DAX about as well as they handle any other domain-specific language: well enough for syntax and common patterns, badly enough that numbers coming out of AI-authored measures should always be validated against a known baseline before anyone makes a decision on them.

What this means for your roadmap

If you are a CFO, VP Finance, or head of FP&A looking at Copilot for Power BI, the honest framing is this:

Copilot is ready to deploy for low-stakes, high-frequency work. Self-service Q&A for non-analyst stakeholders. First drafts of routine narrative. Exploration of known data. These are real productivity wins, and they compound.

Copilot is not ready to deploy for high-stakes causal work. Variance commentary, anomaly explanation, and AI-authored measures all need a human accountability layer before they inform a decision — let alone reach a board.

The difference between those two outcomes is almost entirely governance. A semantic model with rich descriptions, validated measures, monitored refreshes, and a change log is a model where Copilot is safe. A semantic model that was built four years ago by someone who left the company is a model where Copilot will silently hurt you.

If you cannot confidently answer "who validated that measure, and when," you are not ready to let AI answer questions on top of it.

The 3-week readiness checklist

Before you turn Copilot on for any finance workflow that touches a decision, we recommend a three-week readiness pass:

Week 1 — Audit the semantic model. Every measure needs a description. Every table needs a description. Every synonym that your team actually uses needs to be in the model. Untracked ambiguity is the enemy of reliable AI.

Week 2 — Define what Copilot is and isn't allowed to do. Write a one-page policy: which reports Copilot can run against, which it can't, which workflows require a human sign-off before Copilot output leaves the team. Treat it as you would treat any new analyst.

Week 3 — Shadow-run for two weeks. Every Copilot answer a stakeholder asks for gets a parallel answer from a human. Log the deltas. Only the teams that can tolerate the observed error rate should go live. The rest need more work on the model first.

This is not a speed bump. It is what separates a Copilot rollout that earns trust from one that loses it in quarter one.

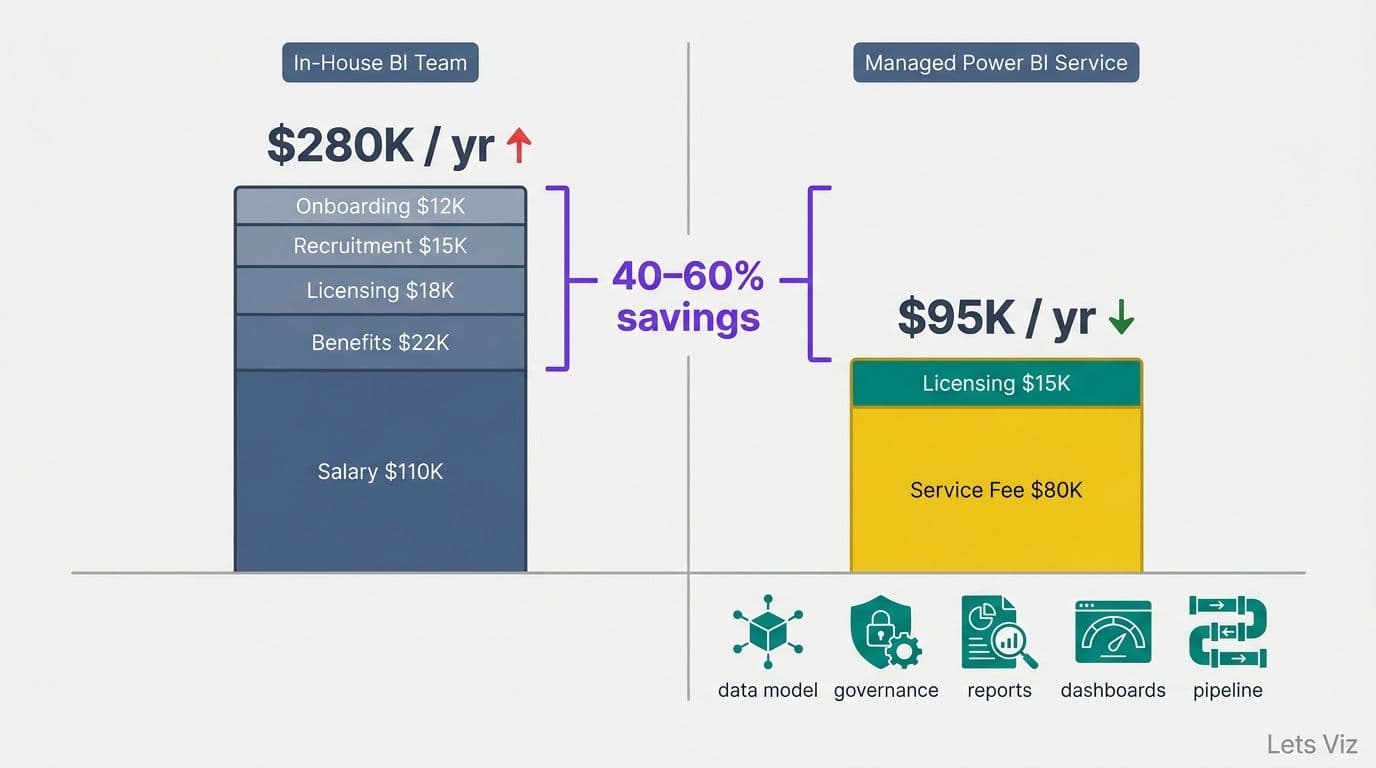

Where we come in

Lets Viz runs Managed Power BI for SaaS finance teams. That means SLA-backed refresh monitoring, a documented hours bank for model work, strategic BI advisory — and, as of 2026, AI governance as a first-class part of the service.

If you are evaluating Copilot for your Power BI estate and you want a partner who will tell you honestly where it will help and where it will hurt, we should talk.